Broadcom Predicts AI Chip Sales Will Surge Past $100 Billion

By David Goldfarb

Last updated: March 5, 2026

First Published: March 5, 2026

Photo: Bloomberg.com

Artificial Intelligence Demand Powers Broadcom’s Growth

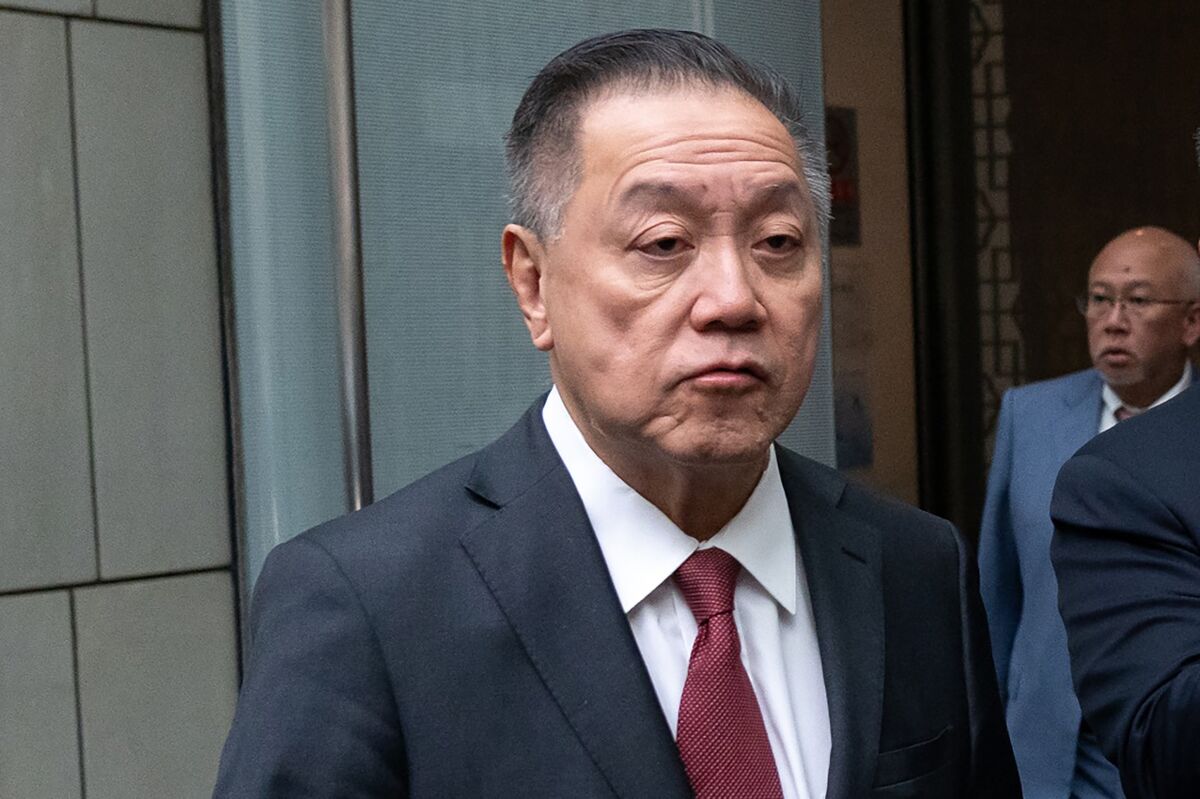

Broadcom is positioning itself at the center of the global artificial intelligence infrastructure boom, with CEO Hock Tan forecasting that the company’s AI semiconductor business could generate well above $100 billion in annual revenue in the coming years.

The bullish outlook came after the semiconductor and infrastructure software giant reported stronger-than-expected results for its fiscal first quarter. During the company’s earnings call with analysts, Tan emphasized that demand for custom AI chips is expanding rapidly as major technology companies invest heavily in building specialized processors to power next-generation artificial intelligence systems.

According to Tan, the company is seeing increasing demand from large hyperscale customers that rely on Broadcom’s expertise to design and develop custom silicon for AI workloads. These partnerships are expected to drive massive growth in the coming years as data centers expand their computing power to support generative AI models, machine learning platforms, and high-performance cloud infrastructure.

Strong Earnings Highlight the AI Momentum

Broadcom’s financial results reflect the strength of the AI-driven semiconductor market. In its fiscal first quarter, the company reported total revenue of $19.3 billion, a 29 percent increase over the same period a year earlier.

The most significant driver of that growth was the company’s artificial intelligence semiconductor business. AI-related revenue more than doubled year over year, reaching $8.4 billion during the quarter. Broadcom now expects that figure to rise further, projecting approximately $10.2 billion in AI semiconductor revenue for the current quarter.
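For readers who want to check how the reported figures relate to one another, the arithmetic can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration built from the numbers quoted above. The variable and function names are made up for this example, and the derived values are rounded estimates, not figures Broadcom reported.

```python
# Figures quoted in the article, in billions of US dollars.
TOTAL_REVENUE_Q1 = 19.3      # total fiscal first-quarter revenue
YOY_GROWTH = 0.29            # 29 percent year-over-year increase
AI_REVENUE_Q1 = 8.4          # AI semiconductor revenue in the quarter
AI_REVENUE_GUIDANCE = 10.2   # projected AI revenue for the current quarter


def implied_prior_value(current: float, growth: float) -> float:
    """Back out the earlier value from the current value and a growth rate."""
    return current / (1 + growth)


def growth_rate(new: float, old: float) -> float:
    """Fractional change from old to new."""
    return new / old - 1


# Derived estimates (not reported by the company).
prior_year_revenue = implied_prior_value(TOTAL_REVENUE_Q1, YOY_GROWTH)
ai_share = AI_REVENUE_Q1 / TOTAL_REVENUE_Q1
sequential_ai_growth = growth_rate(AI_REVENUE_GUIDANCE, AI_REVENUE_Q1)

print(f"Implied year-ago revenue: ${prior_year_revenue:.1f}B")
print(f"AI share of total revenue: {ai_share:.1%}")
print(f"Guided quarter-over-quarter AI growth: {sequential_ai_growth:.1%}")
```

On these numbers, year-ago revenue works out to roughly $15 billion, AI accounts for about 43.5 percent of the quarter's total, and the guidance implies AI revenue growing about 21 percent from one quarter to the next.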

The strong results exceeded Wall Street expectations and reinforced investor confidence in Broadcom’s role as a key supplier in the AI ecosystem. Following the earnings announcement and Tan’s optimistic outlook, Broadcom shares climbed more than 5 percent in after-hours trading.

Custom Silicon Becomes the Next Frontier

A major reason behind Broadcom’s accelerating growth is its involvement in helping technology companies design custom AI processors. Rather than relying solely on off-the-shelf chips, many leading tech firms are now building their own accelerators tailored for specific AI workloads.

Broadcom plays a crucial role in this process by assisting customers in transforming complex chip designs into functioning silicon. The company provides engineering support, chip architecture expertise, and backend development before those designs are manufactured at advanced semiconductor fabrication facilities.

Once the designs are finalized, production typically takes place at specialized chip foundries such as Taiwan Semiconductor Manufacturing Company, which operates some of the most advanced fabrication plants in the world.

This collaborative model has positioned Broadcom as a critical partner for several of the largest technology companies investing in artificial intelligence infrastructure.

Tech Giants Driving Massive AI Infrastructure Investments

Tan revealed that Broadcom is currently working with six major customers on custom AI silicon programs. Among the most prominent are some of the world’s largest technology firms, including Google, Meta, Anthropic, and OpenAI. Additional partnerships are believed to involve Fujitsu and ByteDance.

These companies are building highly specialized processors to power large-scale AI data centers capable of training and running increasingly complex models. The custom chips are designed to improve performance, reduce energy consumption, and optimize computing power for machine learning workloads.

Google was one of the earliest pioneers in this strategy. The company began developing its own tensor processing units in 2015 with support from Broadcom. These AI chips were initially used only internally and were made available to cloud customers beginning in 2018.

Since then, Google’s AI hardware ecosystem has expanded significantly, supporting customers such as Apple and AI research company Anthropic. Broadcom expects the next generation of Google’s custom chips to drive even stronger demand as they are deployed in upcoming AI infrastructure upgrades.

Meta’s AI Chip Strategy Continues to Evolve

Meta is also expanding its efforts to develop proprietary AI hardware. The social media giant has been working with Broadcom on its MTIA accelerator program, which is designed to power recommendation systems, generative AI tools, and data center workloads.

Although some analysts have previously questioned whether Meta would continue investing heavily in custom silicon, Tan reassured investors that the MTIA development roadmap remains active.

According to industry reports, Meta is also exploring ways to integrate Google’s tensor processing units into certain workloads, highlighting how major technology firms are experimenting with multiple hardware strategies to scale AI computing power.

Capacity Constraints Still Challenge the Industry

Despite strong demand, the global semiconductor industry continues to face several structural challenges. One of the biggest bottlenecks involves the supply of high bandwidth memory, a specialized type of memory essential for AI accelerators.

High bandwidth memory enables processors to move massive amounts of data at extremely high speeds, which is critical for training and running large AI models. However, production of this component remains limited, creating supply shortages across the AI hardware sector.

In addition to memory constraints, advanced chip manufacturing and packaging capacity remains tight. Producing cutting-edge processors requires extremely sophisticated fabrication technologies, and only a small number of facilities worldwide are capable of handling such production.

These capacity limits have created intense competition among technology companies seeking to secure manufacturing slots for their next-generation AI chips.

Understanding the $100 Billion AI Opportunity

During the earnings call, analysts pressed Tan for more details about how Broadcom expects AI semiconductor revenue to surpass $100 billion annually.

One estimate presented during the discussion suggested that major AI customers could collectively deploy several gigawatts of computing capacity across their data centers. For example, analysts estimated roughly three gigawatts of demand from Anthropic and another three gigawatts from Google, along with at least two gigawatts from Meta and approximately one gigawatt from OpenAI.

Tan indicated that these projections were broadly aligned with Broadcom’s internal expectations, although the exact revenue generated per gigawatt can vary significantly depending on chip design complexity and deployment scale.
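The analyst estimate can be tallied with a short Python sketch. The per-customer figures come from the estimate quoted above, with "at least two" and "approximately one" treated as exact values for simplicity. The final division is purely illustrative: it assumes, hypothetically, that $100 billion in annual revenue maps onto exactly this capacity, which neither the analysts nor Tan stated, and Tan noted that revenue per gigawatt varies significantly.

```python
# Analyst estimates quoted in the article, in gigawatts of computing capacity.
# "At least two" and "approximately one" are treated as exact for simplicity.
ANALYST_GIGAWATTS = {
    "Anthropic": 3.0,
    "Google": 3.0,
    "Meta": 2.0,
    "OpenAI": 1.0,
}

total_gigawatts = sum(ANALYST_GIGAWATTS.values())

# Hypothetical assumption for illustration only: $100 billion of annual AI
# revenue spread evenly over the capacity above. The article does not say this.
ASSUMED_ANNUAL_REVENUE_B = 100.0
illustrative_revenue_per_gw = ASSUMED_ANNUAL_REVENUE_B / total_gigawatts

print(f"Total estimated capacity: {total_gigawatts:.0f} GW")
print(f"Illustrative revenue per gigawatt: ${illustrative_revenue_per_gw:.1f}B")
```

The four estimates add up to about nine gigawatts. Under the even-spread assumption, that would correspond to roughly $11 billion per gigawatt, a figure that shows the scale involved but should not be read as a forecast.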

The underlying message from Broadcom’s leadership was clear: demand for AI infrastructure is expanding rapidly, and the scale of computing power required by next-generation AI systems is far larger than previously anticipated.

Broadcom’s AI Portfolio Extends Beyond Accelerators

While custom AI processors are a major component of the company’s strategy, Broadcom’s AI business encompasses a broader set of technologies used in modern data centers.

The company develops networking chips, data processing units, digital signal processors, and advanced switching technologies that move large volumes of data between servers and GPUs. These components are essential for building high-performance AI clusters that can handle massive training workloads.

Industry analysts note that when Broadcom refers to its AI semiconductor opportunity, the figure includes all of these technologies rather than just accelerator chips.

As artificial intelligence becomes more deeply integrated into cloud services, enterprise computing, and consumer applications, demand for these supporting components is expected to grow alongside the processors themselves.

The Expanding AI Infrastructure Race

The rapid expansion of artificial intelligence systems is triggering one of the largest infrastructure buildouts in the history of computing. Technology giants are investing tens of billions of dollars annually to build data centers filled with specialized hardware capable of supporting AI training and inference workloads.

Broadcom’s growing involvement in custom chip design and AI networking infrastructure places it in a strategic position to benefit from this trend.

If Tan’s projections prove accurate, the company could become one of the biggest financial beneficiaries of the AI revolution as hyperscale data center operators continue scaling their computing capacity at an unprecedented pace.

For investors and industry observers, Broadcom’s outlook underscores a broader reality: the artificial intelligence boom is still in its early stages, and the demand for powerful, customized chips is likely to grow dramatically in the years ahead.
